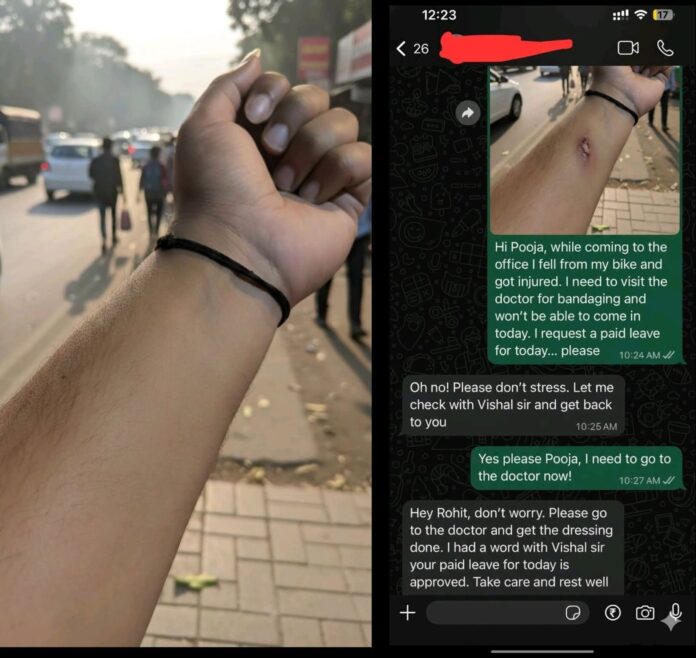

A viral incident involving an Indian office worker has ignited international debate about the growing risks of AI-generated deception. According to screenshots circulating widely on social media, the employee reportedly used Gemini Nano — Google’s on-device generative AI tool — to fabricate a realistic-looking arm injury. He then sent the doctored image to his HR department, claiming he had fallen off his bike on the way to work and needed medical attention.

Within minutes, HR approved his leave.

What might seem like a clever “day-off hack” has triggered broader concerns among cybersecurity experts, HR professionals, and digital-ethics researchers. The incident underscores a risk many have warned about: photographs, once considered reliable proof, can no longer be taken at face value in the age of consumer-grade AI.

Gemini Nano, designed to run directly on smartphones, can create or modify images without needing cloud processing. Early reviewers praised its convenience — but also noted its potential for misuse. The viral images show a convincingly rendered abrasion on an otherwise normal photo of an arm, complete with shadows, depth, and inflammation.

“Five years ago, this level of realism required expertise — now it’s a tap away,” said Dr. Kavita Menon, a visual forensics researcher. “We’re heading toward a world where employers, insurers, and institutions can no longer rely on photographic evidence at face value.”

HR professionals say the incident is a wake-up call. Sick-leave verification may require secondary confirmation beyond photos. Remote-work policies based on trust may be tested more frequently. Companies might increasingly adopt AI-detection tools, although these remain imperfect. Several HR consultants also caution that overcorrecting could harm employee trust, noting that the vast majority of workers don’t misuse leave.

Experts warn that the stakes rise sharply beyond workplace pranks. Insurance claims, legal disputes, and identity verification systems could all be undermined by synthetic visuals. “Visual authenticity is collapsing,” said cybersecurity analyst Omar Reyes. “Institutions built on photographic documentation must evolve quickly, or risk being exploited at scale.”

AI developers, including Google and OpenAI, are already facing scrutiny for not embedding stronger guardrails in consumer tools. Calls are increasing for mandatory watermarking of AI-generated or edited images, improved on-device detection systems, and regulation targeting the use of generative AI for fraud.

While social media reacted with predictable humor — “AI just fooled HR 😂” being one of the most shared captions — researchers say the case is emblematic of how AI is reshaping digital trust. As generative models become more powerful and more accessible, experts stress the need for stronger verification frameworks that go beyond images, including metadata checks, secure documentation systems, and behavioral verification.

For now, the incident serves as a reminder: in 2025, seeing is no longer believing.